In July 2025, a report from MIT’s Project NANDA dropped a statistic that sent shockwaves through the tech world: 95% of enterprise AI initiatives are delivering zero measurable return. Despite $30 to $40 billion in investment, the vast majority of AI pilots are stalling, failing, or quietly getting shelved.

The report immediately sparked a huge debate. AI doomers had the perfect statistic to herald the inevitable AI crash they’d long foreseen, and AI evangelists quickly hit back, criticizing the study’s methodology as deeply flawed. Paul Roetzer, CEO of the Marketing AI Institute, dismissed the study as “not a viable, statistically valid thing.” Others went further, accusing the researchers of pushing their own agenda.

It seems that everyone’s blaming the technology. I don’t think they should be.

The 95% problem (and why the number doesn’t matter)

Let’s get the controversy out of the way. Yes, the MIT study has limitations: the sample size was small, the definition of “zero measurable return” was strict and narrow, and some critics have valid points about the methodology.

But even the harshest critics aren’t saying AI pilots are succeeding in droves. They’re just arguing about how badly they’re failing.

And research backs it up. A McKinsey survey found that while 65% of organizations regularly use generative AI, only one-third have successfully scaled it across the enterprise. Similarly, S&P Global found that the average organization scrapped 46% of its proof-of-concept generative AI projects.

The MIT stat may be aggressive, but the direction is consistent across every major study I’ve reviewed: most AI pilots aren’t delivering.

So what’s actually going wrong?

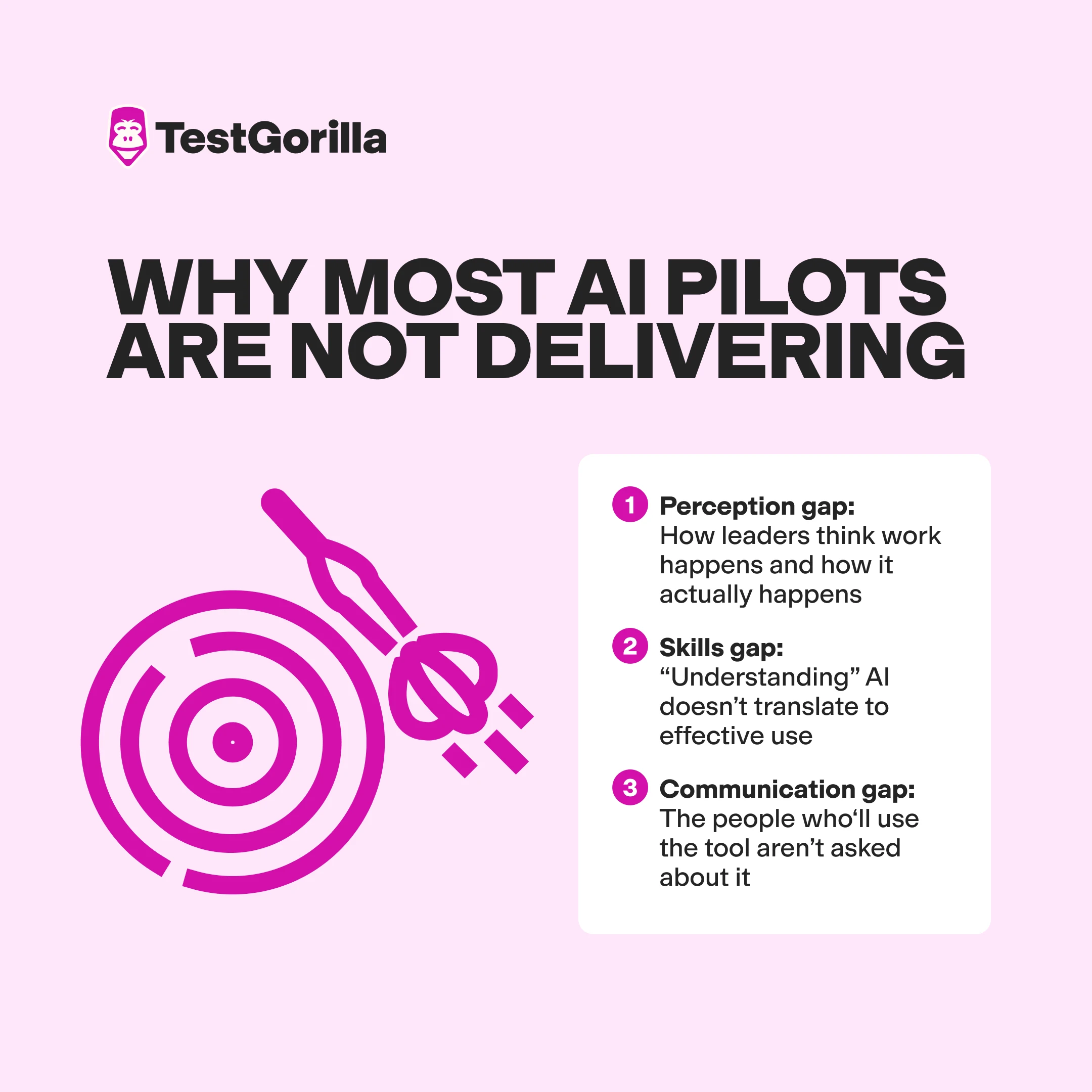

The real reason AI pilots are failing

Common explanations focus on technology – immature models, poor data quality, or complex integration. These factors do matter. But I don’t believe they’re the root cause.

Experts say the real issue is organizational. AI Strategist Megan Clarke explains:

“Pilots [are] being treated as technology experiments rather than organizational change initiatives.

“Leaders become excited about the technology, thinking that it alone will solve business challenges, and rush to prove value without first defining strategy, stabilizing the processes, or identifying the meaning of success beyond the pilot.”

It seems organizations are deploying AI faster than they understand how their own work gets done. Let’s explore this.

The perception gap: How leaders think work happens and how it actually happens

When I managed software development for a call center, leadership would mandate tools based on flashy demos and vendor promises. But the people doing the actual work lived in a different reality – one full of workarounds, exceptions, and on-the-job knowledge that never made it into any process document.

The same thing keeps happening with AI. Leaders see a polished demo and jump at the chance to beat the competition to the punch. But when these tools are hastily deployed, frontline teams are baffled about how they fit into workflows that leadership doesn't even know exist.

We spoke to Chris Kirksey, founder and CEO of Direction.com, and he described exactly this disconnect:

“Typically, leaders do not understand which tasks would benefit most from AI improvements…A lack of transparency surrounding how work is accomplished, the skill level of employees, and the interdepartmental workflow process hinders the success of AI initiatives.”

The skills gap: “Understanding” AI doesn’t translate to effective use

“While employees may know how to use the AI system, they may not have the ability to take action on the insights, so even sophisticated AI fails to achieve desired results,” explains Kirksey.

Leaders may assume that because employees can access the tool, they can use it productively. That’s like assuming someone can write a novel because they own a word processor.

Correct AI use requires understanding context, recognizing when outputs need verification, and knowing when a tool is the wrong choice entirely. Most organizations haven’t even defined what “good” AI use looks like, let alone measured whether their people can do it.

A 2025 study examined AI in project management and found efficiency gains of 15–20% were possible, but only when organizations provided clean, structured data. Most don’t. The study concluded that “the main barriers to the widespread introduction of AI...are not technical, but rather organizational and psychological.”

The communication gap: The people who’ll use the tool aren’t asked about it

I came across a thread on r/ExperiencedDevs that captured the chaos of a poorly planned AI rollout. One software engineer described a nightmare scenario: “Prod is on fire 24/7,” they wrote. Management was pushing AI adoption from above, but the engineers actually doing the work were drowning in AI-generated code that needed constant cleanup. The tool was technically functional, but the implementation was a disaster.

Another exasperated data scientist described becoming “part LLM babysitter,” constantly checking AI outputs for errors.

These aren’t isolated complaints. They happen when AI tools are deployed without input from the people doing the work. The original MIT report found that 70% of users said AI tools don’t learn from feedback, and 60% complained that too much manual context is required each time – clear signs that organizations haven’t figured out how to integrate AI into human workflows.

Research supports this. Studies of AI adoption in government agencies found most suffered from “solutioneering” – a rush to adopt technology without understanding the organizational changes, legal risks, or long-term maintenance required. Frontline workers knew why certain processes existed, but that knowledge never made it into the project plan.

Similarly, a 2025 study of AI implementation in banking found that even technically perfect systems were rejected by users because analysts couldn’t explain AI decisions to clients or regulators.

The researchers summed it up: “The failure of AI-based systems in professional contexts is often not due to technical flaws but to a lack of alignment with the complex activity systems they are meant to support.”

In other words, when frontline workers aren’t consulted, their practical knowledge gets overlooked, and the technology fails to fit the reality of how work actually happens.

Your organization can stop AI pilots from failing: Here’s how

Based on my experience and conversations with experts, a few things separate the successful pilots from the duds. Organizations that succeed with AI do things differently: not with better technology, but with better preparation. Your organization can do it too.

Redesign work before you deploy tools

“Successful pilots redesign work first, not last,” Clarke told us. “They engage frontline staff early, clarify ownership, and explicitly define new ways of working alongside AI. Just as importantly, they invest in change management and learning as core deliverables and not afterthoughts.”

This principle isn’t new. It’s how any technology finds successful adoption. AI just makes the stakes higher because the potential for both value and disruption is greater.

The MIT research at the center of this debate reinforces this. They found that mid-market companies were implementing AI pilots in about 90 days, while enterprises took nine months or longer. The difference wasn’t resources or technical sophistication. It was organizational agility: the ability to redesign workflows without getting stuck in committee.

Involve frontline workers before launch, not after

One pattern I see in successful pilots is that they treat frontline expertise as essential input, not an afterthought. The people doing the work every day understand things that never make it into process documentation: why certain exceptions exist, which workarounds are load-bearing, and where the real bottlenecks hide.

Researchers who studied AI adoption in public sector agencies found that deliberation with frontline workers before development began was one of the strongest predictors of success. When their input was ignored during the pilot's design, the tools ended up being “technically sound but practically useless.”

The researchers emphasized that deliberations should include the possibility of deciding not to build a proposed tool – something that rarely happens when decisions are made exclusively at the leadership level.

Frame AI as a partner, not a replacement

I’ve noticed that when people believe AI is coming for their jobs, they resist it. When they see it as backup, they embrace it.

We asked Roman Rylko, CTO at Pynest, what successful human-AI collaboration actually looks like in practice. He said it’s when “AI is seen as a partner who takes away routine and data collection.” In those cases, the team feels less like management is quietly trying to replace them with AI, and more like they’re being offered tools that genuinely help them.

Companies seeing real results position AI as a tool that supports human judgment, not replaces it. This framing matters more than most leaders realize.

This means being explicit about which decisions should remain with humans, celebrating when AI handles tedious work so people can focus on higher-value tasks, and talking about AI as something that makes good employees better, not as something that makes employees unnecessary.

Create psychological safety for experimentation

“Successful pilots focus on psychological safety and permission to play,” explains Joshua Ness. “This isn’t easy to achieve because it forces leaders to dismantle the organizational fear that AI is a replacement tool.”

A 2025 study of human-AI collaboration in HR supports this. Researchers found that “organizational resistance” was a top barrier to AI adoption – not because people were technophobic, but because they hadn’t been prepared for the shift.

The study concluded that “successful adoption hinges on addressing ethical concerns and ensuring organizational preparedness...Sustainable collaboration requires enhancing digital competence and developing robust governance frameworks.”

In practice, this means teams need permission to experiment, room to fail without consequences, and the belief that learning to work with AI is an investment in their future – not an ominous sign pointing to their redundancy.

Give non-technical leaders real oversight tools

Here’s a finding that surprised me. A meta-analysis of more than 220 AI governance tools found that 90% were built for developers. The people who actually own the business risks – legal teams, HR leaders, and executives – had almost nothing to help them oversee AI after launch.

This matters because no one ends up owning the AI outcomes once the pilot goes live. When problems emerge, there’s no clear path to fix them. I’ve watched this play out repeatedly: a pilot launches with fanfare, the technical team moves on to the next project, and six months later, someone notices the AI is producing questionable results. Who’s responsible? Nobody knows.

The researchers noted that responsibilities are “often ambiguously defined and assigned, leading to confusion, miscommunication, and inefficiencies.”

Organizations that succeed are the ones that decide up front who owns the outcomes of AI initiatives and give those people the right tools to monitor them.

How to stop AI pilots from failing: Focus on people and skills

All the organizational changes above won’t matter if your people can’t work effectively with AI. The companies getting AI right don’t just focus on flashy tech – they understand what their people can actually do.

This means moving beyond job titles and self-reported experience to measuring real capabilities: Can this person critically evaluate AI outputs? Can they adapt their workflow when tools change? Do they understand the downstream impacts of their decisions?

Why AI readiness is hard to assess

Here's the problem: most organizations rely on weak signals to assess AI readiness. Everyone puts “proficient with AI tools” on their resume, and interviewers ask vague questions like “Have you used ChatGPT?” to gauge proficiency. But those signals reveal almost nothing about whether someone can actually work with AI responsibly and effectively.

“I’ve used ChatGPT” doesn’t tell you whether someone can spot hallucinations, whether they’ll blindly trust outputs that need human review, or whether they understand when AI is the wrong tool for the job.

This challenge isn’t limited to AI. According to TestGorilla’s research, 63% of employers said it was harder to find great talent in 2025 than in 2024. If organizations struggle to assess skills during hiring – when they’re actively trying to evaluate candidates – how can they realistically assess the AI-readiness of their existing workforce?

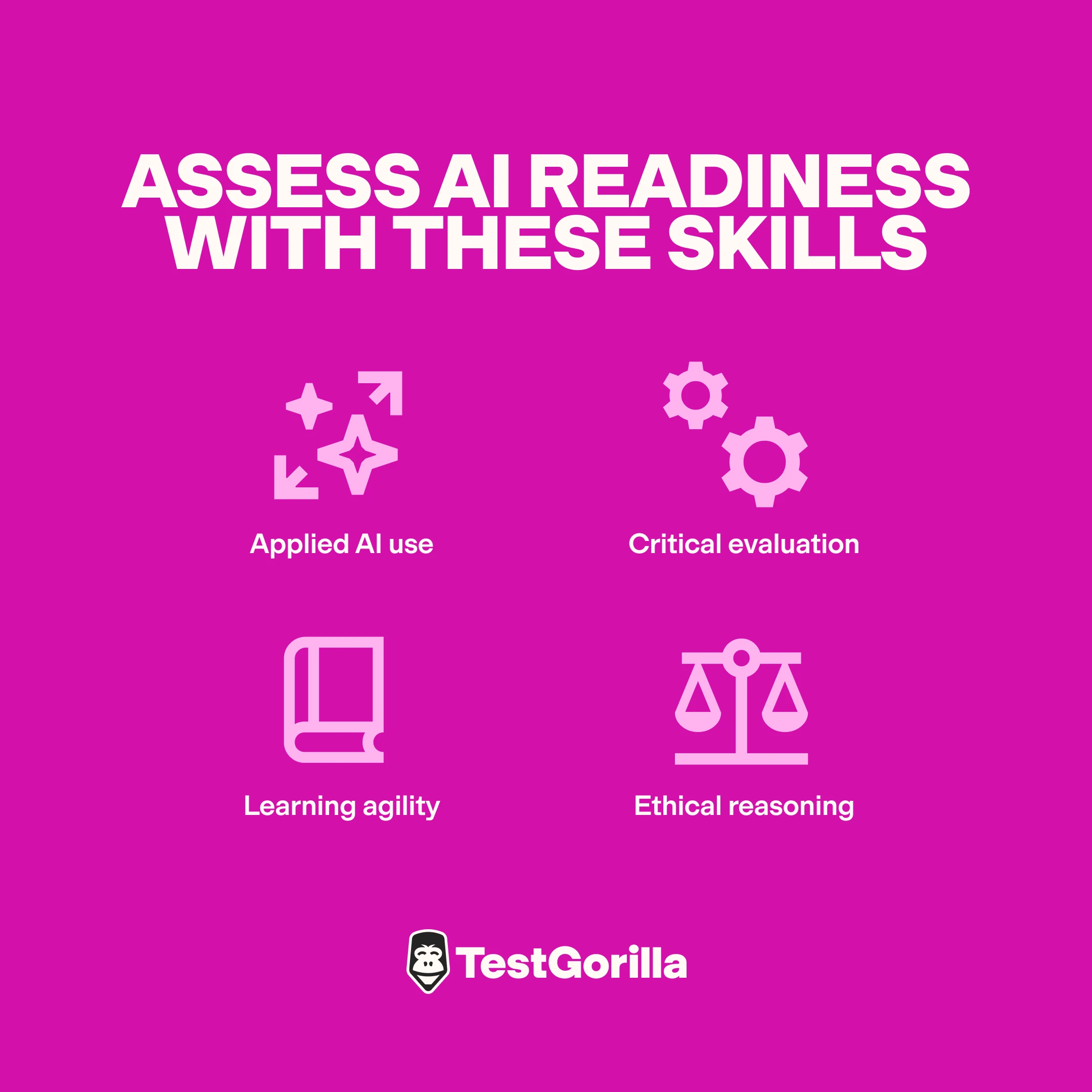

Test for the skills that actually matter

Assessing AI readiness isn’t out of reach. In fact, the capabilities that drive AI success are specific and measurable:

Applied AI use: Not just familiarity, but the ability to apply tools to real work problems.

Critical evaluation: Knowing when AI outputs need verification and having the judgment to catch errors.

Learning agility: Adapting as tools and workflows evolve, often rapidly.

Ethical reasoning: Understanding the stakes of AI-assisted decisions, especially in high-impact contexts.

These aren’t abstract concepts. They’re observable behaviors – and they can be assessed.

Bank on evidence, not assumptions

These are the facts: If you don’t know which of your people can work effectively with AI, your pilot will struggle. If you can’t identify the capability gaps, you can’t close them. And if you’re guessing about readiness, you’re gambling with outcomes.

Many AI failures originate at the hiring stage. Organizations bring people in based on resumes and interviews, only to discover months later that those hires can’t work effectively with AI tools. By the time the pilot stalls, the capability gap is already baked into the team. Fixing it retrospectively is expensive. Hiring for it upfront isn’t.

That’s why many organizations are turning to skills-first platforms like TestGorilla that use AI-powered hiring tools to evaluate whether people have the capabilities to work effectively and responsibly with AI.

Research found 34% of employers using skills-based hiring report being “very satisfied” with their hires, compared to just 18% of those who don’t. Evidence-based evaluation simply produces better results than guessing.

AI pilots succeed when you invest in people, not just platforms

Whether 95% of AI pilots fail may be debatable. The underlying problem isn’t.

AI transformation means transforming your workforce. Before your next AI pilot, ask the questions that actually matter:

Do we really know how work gets done in this organization?

Do we know what skills our people have?

Do we know who’s ready to work with AI, and who needs support?

Have we involved the people who’ll actually use this tool in shaping how it gets deployed?

Are our new hires AI-fluent?

If you can’t answer those questions, you’re not ready. And no amount of investment in platforms will change that.

Contributors

Chris Kirksey, Direction.com, Founder & CEO

Roman Rylko, Pynest, CTO

Joshua Ness, Senior AI Strategy Advisor

Megan Clarke, King County, CIO
