The State of Hiring for AI Fluency 2026

71% of organizations have defined AI fluency. 95% list it as a hiring requirement. And 59% have still made a bad AI hire. We surveyed ~2,000 senior hiring leaders to find out why their candidates sound fluent in the interview but can't deliver on the job.

01 The Highlights

Here’s a quick summary of this report's findings.

01.1

The AI paradox: High stakes, low hits

The year is 2026, and the hiring market is witnessing a tectonic shift toward a new kind of talent:

53% of hiring managers now prefer a candidate with AI fluency over one with deep domain expertise.

That’s a huge change. Yet in our survey of ~2,000 experts in the hiring industry, 59% of those same organizations report having made a "bad AI hire," i.e., someone who successfully navigated the interview but failed to perform on the job. We are facing a "Grand Canyon-sized gap" between what organizations say they need (applied capability) and what their processes are actually built to detect (vocabulary and confidence).

01.2

Why your AI hiring processes are failing

Our report reveals a stark "Infrastructure Paradox." Organizations are trying hard: 71% have formally defined AI fluency, and 95% list it as a requirement. But these systems are crumbling because they rely on the same broken proxies we’ve used for decades:

The "Awareness" Trap: 37% of organizations set their minimum bar at "tool awareness," or simply knowing that a tool exists. As we often say, we all know chainsaws exist, but that doesn't mean we'd trust the person in the interview to use one without losing a hand.

The Subjectivity Trap: 19% of organizations leave AI assessment entirely to the individual discretion of hiring managers. Without a shared rubric, "fluency" becomes a subjective "vibe-check" that rewards the best storyteller, not the best hire.

Confidence vs. Competence: Current interviews are designed to observe communication, not execution. A candidate can learn the language of "agentic workflows" and "RAG" in a weekend, but that doesn't mean they can audit an output or redesign a workflow.

01.3

The structural divide: US vs. UK

The data shows a clear divergence in how these tensions play out globally.

33% of US organizations report frequent AI-driven errors, compared to just 13% in the UK. The difference is upstream: US organizations are more likely to set the bar at mere "awareness" (45%, versus 29% in the UK), while UK organizations are more likely to require independent use and verification.

In short, the US has a conviction problem; the UK has a capacity problem.

01.4

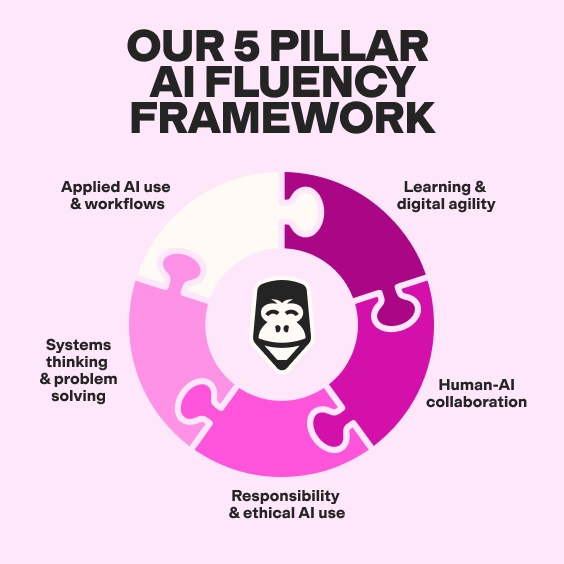

The TestGorilla 5-Pillar Framework

To close the gap, we must move from declarative signals to verifiable evidence. The report introduces our proprietary framework designed to measure metacognition, or how a candidate thinks, not just what they can name.

Applied AI Use & Workflows: Can they actually execute end-to-end tasks?

Learning & Digital Agility: Can they adapt when the tools (inevitably) change?

Systems Thinking & Problem Solving: Do they understand how their AI use ripples across the team?

Responsible & Ethical AI Use: Do they have the judgment to catch a hallucination before it becomes a lawsuit?

Human-AI Collaboration: Can they document and communicate their AI logic to a teammate?

01.5

The bottom line: Moving from description to demonstration

AI fluency isn't a knowledge state; it's a behavioral pattern, and hiring for it calls for a path that is simple but disciplined: Change the question. Stop asking which tools they use; start asking them to walk you through a workflow they redesigned, what broke, and how they fixed it.

The organizations that move from "vibe-based" hiring to evidence-based verification are the ones that will win the AI era.

Are you ready to stop hiring the best storyteller and start hiring the best performer?

Then let’s get started.

02 The AI paradox: High stakes, low hits

The year is 2026. Your LinkedIn feed is full of AI engineers, GTM and marketing engineers, AI-powered product managers, and posts about how Claude, n8n, Clay, and a host of other impactful tools have changed how companies build products and go to market.

02.1

“AI fluency” is the new buzzword, one we predicted 12 months ago would be the next big thing. And organizations are trying hard to hire for it. In a survey of ~2,000 senior hiring leaders across the US and UK, 53% of hiring managers stated they now prefer a candidate with high AI fluency over one with deep domain expertise. That is a significant priority shift. Most organizations have defined what AI fluency means for their teams. Many have built internal measurement criteria. Almost all list it as a formal requirement in their hiring processes.

And yet more than half of those same companies, 59% to be precise, have still made a bad AI hire: someone who spoke the language in the interview, named the tools, described the workflows, and then, once through the door, couldn't apply any of it to the job.

That tension, between how hard organizations are trying and how poor the outcomes remain, runs throughout this report as we look more closely at AI-related decision-making criteria and the thresholds organizations actually use. This report sets out what those tensions are, how they affect hiring decisions, and how they might be addressed differently.

53% of hiring managers now prefer a candidate with high AI fluency over one with deep domain expertise

59% of those same organizations have still made a bad AI hire

This is not a new failure mode in hiring. Hiring has leaned on the same proxies for decades: years of experience, pedigree across companies and universities, confident interview answers, and the right keywords on a resume. And for decades, the research has quietly been telling us that those proxies do not work (see TestGorilla's State of Skills-Based Hiring 2025). A widely cited meta-analysis by Van Iddekinge and colleagues found that the correlation between years of experience and actual job performance was roughly 0.06, effectively zero. Yet these signals remain the default inputs into most hiring decisions, not because they predict performance, but because they are easy to collect, easy to compare, and easy to feel confident about.

AI fluency is now being filtered through that same broken funnel. And the requirement for it has made this gap more acute, more visible, and more critical to address.

The skill organizations say they need is an applied one: integrating AI into real workflows, exercising judgment over outputs, adapting as tools and constraints shift. But the signals hiring processes are built to capture are different in kind. They are declarative: what the candidate knows, what they have used before, how fluently they can describe it in a thirty-minute conversation. The result is predictable. Candidates who articulate AI well are rewarded; candidates who apply it well may or may not be, because the process has no reliable way to distinguish between them. And once the wrong candidate is hired, the consequences compound: slower execution, inconsistent output, and misplaced confidence in AI-generated work that no one on the team is equipped to audit.

Consequences of a mis-hire

Slower execution

Inconsistent output

Misplaced confidence in AI-generated work

The gap, then, is not about effort or intention. It is about evidence. Specifically, about the kind of evidence current hiring processes are built to collect, and why that evidence cannot tell you what you actually need to know. Until that changes, the paradox holds: rising investment in AI-fluent talent, alongside falling confidence that the process is finding it.

03 Hiring for AI fluency’s Grand Canyon-sized gap

The tension described above is not one TestGorilla identified in isolation. In February 2026, we convened a group of talent leaders, assessment scientists, and hiring practitioners for a virtual event called "Hire for the AI Era," which drew over 1,000 registrations. The conversations were candid, and several were uncomfortable, which is usually a sign that something important is being said.

03.1

Wouter Durville, TestGorilla's co-founder and CEO, put the core tension plainly: "95% of companies that are claiming to use AI fluency as a hiring factor are still struggling with how to actually do it."

Isabel Berwick, the Financial Times journalist whose coverage of the future of work has drawn millions of readers, offered a structural diagnosis: "Too many companies use blanket, non-differentiated AI screening processes to reduce the candidate pool from unmanageable to just about manageable. Many good people are lost along the way."

Hung Lee, curator of Recruiting Brainfood and one of the most closely followed observers of the talent acquisition industry, identified the underlying cause: "The terminology that people are using right now hasn't really caught up to describe what AI fluency actually means. The laggards tend to use 'AI-literate' or 'AI-fluent' as a generic bucket to say: we need someone to help us get there. But they don't know what that looks like themselves."

Three people, three different vantage points, one shared diagnosis: the gap between what organizations say they are hiring for and what they can actually identify and measure is widening, not closing.

To understand why, and to build something more useful than another list of observations, we surveyed nearly 2,000 senior hiring leaders across the United States and the United Kingdom. 56% identified as senior leaders or senior decision-makers within hiring: the people shaping hiring processes in real time. The sample spanned organizations hiring anywhere from one to 250-plus roles per year, across industries including technology, healthcare, finance, consulting, and education, and across hybrid, remote, and on-site teams.

We asked a focused set of questions: how organizations are defining AI fluency today, how they are measuring it, where those processes break down, and what actually happens once candidates join. We also drew on the expert conversations from the February event, and on a selection of external research, including frameworks from Zapier and the Microsoft Work Trend Index.

The findings that follow reflect a market in the middle of working something out. There are no clean answers yet for a technology whose fundamentals and use cases are evolving this quickly, but there are clearer questions, and a framework for thinking about them more rigorously than most hiring processes currently allow.

By the end of this report, you will have:

A practical, nuanced understanding of how to define AI fluency for your specific use case.

A thorough framework, created and used by TestGorilla, that you can implement in your own AI hiring practices to identify truly AI-fluent talent across all roles.

Snapshot of the Survey

~2,000 people surveyed across the US and UK

56% identified as senior leaders or senior decision-makers within hiring

questions covering how organizations define, measure, and evaluate AI fluency in their hiring processes

industries represented, from technology and healthcare to finance, consulting, and education

1 to 250+ roles hired annually by these organizations

04 Why your AI fluency methodology isn’t working

04.1

The infrastructure is in place. The signal is broken

Organizations have not been passive in the face of this challenge.

71% of our respondents’ organizations have formally defined what AI fluency means for their teams.

50% have built internal measurement criteria.

95% list AI competency as a stated hiring requirement.

By most measures, this looks like a system that is working. It isn't. And the reason is instructive.

Building a definition and building a measurement are two different things. A definition tells you what you are looking for. A measurement tells you whether you found it. Most organizations have done the former while believing they have done the latter.

The infrastructure is real, but it is measuring the wrong inputs. And infrastructure that measures the wrong thing does not produce better outcomes. It produces more confident wrong ones!

Below, we explore three tensions created by the gap between AI fluency requirements, the infrastructure built to support them, and the signals that infrastructure actually captures.

04.2

Tension 1: A definition is not a measurement. Most organizations have confused the two.

71% of organizations have formally defined AI fluency. 50% have built internal measurement criteria to go with it. On paper, this represents a serious institutional commitment to getting AI hiring right.

And yet 59% of those same organizations have made a bad AI hire.

The Apollo program did not land on the moon until Apollo 11, and not because of a lack of ambition or investment. It nearly failed, repeatedly, because the complexity being managed had outgrown the tools designed to manage it. It needed iterations, from the uncrewed Apollo 4 to Apollo 7, the first crewed Apollo mission, before getting it right.

Hiring has often leaned on superficial signals. When you cannot directly observe whether a candidate will perform well in a role, it’s natural to look for indicators that may correlate with performance:

A degree from a credible institution

Years of relevant experience

A confident answer to a technical question

But these are not measures of capability. They are signals we have treated as marks of quality, in the hope that they yield higher-quality candidates.

The problem with these signals is that they erode. We’re building Apollo 11 using the rules of Apollo 1: it likely won’t make it to outer space, let alone the moon.

These are just proxies. And as the research on hiring proxies consistently shows, proxies feel more reliable than they are. The degree requirement felt reliable. The years of experience felt reliable. The confident interview answer felt reliable. None of them predicted performance with any meaningful consistency. AI fluency, filtered through the same process, is producing the same result.

A candidate who has never built an AI workflow in a professional context can, with modest preparation, describe one convincingly. They can name the right tools, frame the right trade-offs, and deploy the right language. An interview rewards articulation, but it was never designed to test execution.

Jason Miller, Head of People Intelligence and AI at Natera, put it with characteristic directness at our February event: "Putting ChatGPT on your resume is the equivalent of saying proficient in Microsoft Office. We are getting to a point where there are pre-established table stakes, and no one has really figured out yet how much AI we allow in an interview, or how to cut through the performance."

04.3

Tension 2: 37% of organizations set the bar at tool awareness. The job requires something else entirely.

The fact that 59% of organizations have made a bad AI hire isn't an accident; it’s a structural inevitability. Consider this: when we asked organizations where they set the minimum standard for AI fluency, 37% said tool awareness, meaning knowing which AI tools exist and where they might broadly apply. That is not a standard for fluency. It is a standard for exposure.

This problem is amplified by a lack of standardization.

Currently, 19% of organizations leave AI fluency assessment entirely to the individual discretion of the hiring manager. Meanwhile, 31% of hiring managers say they struggle to distinguish between candidates who "understand the tech" and those who just "use the terminology." Without a shared, science-backed rubric, evaluation defaults to a subjective impression. The candidate who presents with the most confidence wins, regardless of what they can actually deliver on day one.

In other words, nearly a third of managers acknowledge they can be fooled by "storytellers," yet 19% of organizations still rely on a "vibe-based" interview, inviting bias into the system, knowingly or not.

The distinction matters because what organizations say they need from AI-fluent hires bears no relationship to what a tool-awareness threshold actually screens for. Efficiency gains, productivity improvements, and revenue impact (the outcomes organizations consistently cite as the measures of AI performance) require judgment, verification, and the ability to adapt as conditions shift. None of those capabilities is visible from a list of tools a candidate can name.

When you hire a skilled prompt writer, you get excellent prompts. That’s not nothing. But it is not a measure of the outcomes organizations are trying to drive. A candidate who has built genuine fluency has not just written good prompts: they have used AI to redesign how work gets done, caught errors before they caused damage, and made judgment calls about when not to use it at all. The threshold needs to reflect that, not the lowest version of it that still counts as engagement with AI.

Only 26% of organizations currently require candidates to demonstrate independent AI use and verify results as part of the hiring process. That number should be the floor. Currently, it functions as the ceiling.

Chart: Organizations requiring candidates to demonstrate independent AI use and verify results as part of the hiring process (26% do; 74% do not)

04.4

Tension 3: The current interview structure finds the best storyteller. Not the best hire.

The third tension sits inside the interview itself, the one part of the hiring process that most organizations have not fundamentally changed.

An interview is designed to observe communication: what a candidate says, how they frame it, and how confidently they hold a position under questioning. It is not designed to observe execution. And for AI fluency specifically, the gap between those two things is where almost all bad hires originate.

Going deeper requires changing what the interview is designed to surface. Three things make a material difference.

The first is a structured evaluation framework: one that gives every interviewer specific, scoreable dimensions before the conversation begins, rather than relying on general impression afterward.

Lou Adler, CEO of Performance-Based Hiring, describes the discipline of going narrow and deep rather than broad and shallow: "I ask the most significant accomplishment question. What's the most important thing you've ever done? It takes 15 to 20 minutes to fully understand what that person did, their role, how they organized, who was on the team, what the projects were." Applied to AI fluency, that translates directly: have you used AI to redesign a workflow? What changed? What broke? What did you verify? What would you do differently? Those questions cannot be answered convincingly by someone who has not actually done the work.

The second is role simulation: Asking a candidate to walk through how they would approach a specific, realistic task using AI, with a constraint introduced mid-way, surfaces adaptation in real time. The strongest candidates reframe and adjust. Candidates who are just performing stall, repeat vocabulary, or describe what they would theoretically do rather than demonstrating how they actually think.

The third is a collective debrief structured around shared evidence: As Lou Adler observes, "You get everybody in the interviewing room, and you debrief collectively, and you start sharing that evidence. And actually, the truth comes out. By everybody sharing the evidence, you see things you didn't think you actually heard." Individual interviewers are susceptible to confident performance. A structured debrief using shared, specific evidence is considerably harder to deceive.

The interview, as currently structured, is not designed to observe metacognition. Romina Da Costa, TestGorilla's Director of Talent and Assessment Science, frames the underlying principle with precision: "What we get from a psychometric lens is that we are not really looking at the output (what did they create with AI) but that metacognitive process of how they got to that output, and why they defend it as the best output given the constraints of time and resources and what they had in front of them. And how did they adapt and evolve, because the technology isn’t standing still. That is the key differentiator."

Redesigning the interview is the difference between a process that reliably produces confident wrong hires and one that finds people who can actually do the work.

04.5

Outcome: The wrong hire’s impact doesn't stay in the interview room

33% of US organizations report that a team member's over-reliance on AI has led to an error in the last six months. In the UK, that figure is 13%. That is a 20-percentage-point gap between two markets operating in the same global economy, hiring for the same kinds of roles, in the same technology environment.

So what is the UK doing differently? Quite a lot, as it turns out. And most of it happens before a single interview takes place.

Start with definitions. 71% of US organizations have formally defined AI fluency, compared to 72% in the UK. Virtually identical. Both markets have made the commitment on paper. But when you look at what those definitions are actually anchored to, the divergence starts to show.

Chart: Defining AI fluency in the US and UK (struggle to define AI fluency across role types; unclear expectations for AI use in roles; minimum bar set at tool awareness)

62% of US hiring managers cite the challenge of defining AI fluency differently for technical versus non-technical roles, compared to 46% in the UK. Paradoxically, the US is more aware of the complexity of the problem and less equipped to resolve it. 57% of US organizations report a lack of internal alignment on how much AI augmentation is actually required in a given role, versus 41% in the UK. Awareness of a problem, it turns out, is not the same as having solved it.

Where the gap becomes most concrete is in the threshold data. In the US, 45% of organizations set the minimum bar for AI fluency at tool awareness, or simply knowing what tools exist and where they might broadly apply. In the UK, that figure is 29%. British organizations, by contrast, are meaningfully more likely to set the bar at independent use and verification: the standard that actually requires a candidate to demonstrate applied judgment rather than simply name the right tools. One market is screening for vocabulary. The other is screening for capability.

Among organizations that have not defined AI fluency at all, the failure modes diverge further. In the US, 29% say they do not need a definition, while a further 34% say they struggle to define it. In the UK, only 22% say they do not need a definition, while 47% say they simply have not gotten around to it yet.

Put plainly, the UK failure is a capacity problem. The US failure, in a significant number of cases, is a conviction problem. Organizations that have decided the question is not worth answering are not going to stumble into better hiring outcomes.

None of this is abstract. Set a lower bar, with less internal alignment, in a market that is more likely to treat the definition question as already resolved, and you get 33% of organizations experiencing AI-driven errors often. Raise the bar, acknowledge what you have not yet done, and that figure drops to 13%.

Garbage in, garbage out is a principle most technology teams understand intuitively. It applies equally well to hiring. The quality of the standard set upstream determines the quality of the outcome downstream. That relationship is not theoretical. It is measurable, and it is measured here.

05 AI fluency. Harder to define than “situationship”

Irish playwright George Bernard Shaw is often credited with saying: “If all the world’s economists were laid end to end, they still would not reach a conclusion.” Similarly, if you ask ten hiring managers what AI fluency means, you will get ten defensible answers.

That is not because the question is unanswerable. It is because the market has adopted a term without agreeing on a definition, and everyone has quietly filled the gap with their own.

This matters more than it might seem. A shared vocabulary is not a nicety. It is the foundation on which measurement is built. When the term means different things to different organizations, the rubric cannot travel, the benchmark cannot hold, and the hire that looks right on paper looks wrong on the job.

Our survey clearly captures this fragmentation. When we asked hiring managers where they set the minimum threshold for AI fluency, the answers did not cluster. They scattered.

The bar-setting data from our survey reveals four distinct operating standards, each describing a fundamentally different expectation of what an AI-fluent candidate actually is.

Four different bars. All operating under the same “AI fluency” label.

In a fast-moving technology environment, terminology will always lag behind capability. The Microsoft and LinkedIn 2024 Work Trend Index found that 75% of knowledge workers now use AI at work, with usage nearly doubling in the preceding six months.

The vocabulary used to describe that usage has not kept pace with the behavior itself.

05.1

One term. Every function. Completely different problem.

Fragmentation across organizations is one problem. Fragmentation within them is another, and in some ways a harder one to solve. The challenge of defining what fluency looks like for a non-technical role versus a technical one was identified by 54% of hiring managers as a primary hurdle.

This represents the single highest-ranked challenge in our data. It significantly outranks other common industry concerns:

The pace of evolution: 35% cited the fact that definitions change as fast as the tools themselves.

Terminology vs. Understanding: 31% struggle to distinguish candidates with true understanding from those who simply use the correct vocabulary.

Lack of benchmarks: 31% noted the absence of clear industry standards to measure team proficiency against the market.

The difficulty is real and structural. AI fluency for a software engineer looks like chaining model calls with fallback logic and building evaluation tests to catch hallucinations. For a revenue operations or marketing leader, it looks like architecting multi-step AI agents that orchestrate outreach, scoring, and follow-up across a pipeline, as well as knowing where human judgment needs to stay in the loop before a prospect is touched. For an HR professional, it looks like redesigning screening workflows and using AI to reduce bias in job description language.

Different tools. Different risk profiles. Different vocabulary. But the underlying behaviors (judgment over outputs, ethical awareness, and the ability to communicate AI use to colleagues who need to understand or audit it) remain the same across all three.

Most organizations have defined AI fluency at the tool level, and a tool-level definition cannot cross functional lines. That means the 54% who cannot define it across functions are struggling because they started from the wrong end of the problem. In the UK, meanwhile, 47% of those who haven’t yet defined AI fluency say they simply have “not gotten round to it yet,” a sign not of indifference or confusion, but of capacity. The intention exists. The bandwidth does not.

05.2

The ROI of getting it right

The organizations that have invested in a clear, working definition of AI fluency report immediate and measurable returns.

They say a clear definition:

helps them upskill existing employees more effectively

makes it significantly easier to find and hire AI-fluent talent

allows them to set clear AI expectations across their teams

These are not abstract benefits. They are operational advantages that compound over time. A clear definition makes every downstream process more consistent and more predictive: job description writing, screening, interviewing, onboarding, and performance management.

06 The state of AI fluency frameworks

Several serious attempts to define and measure AI fluency have emerged in the last two years. Worth noting: their existence is itself a signal.

06.1

IBM's framework, published in 2025, distinguishes three tiers: AI-aware (understanding concepts and implications), AI-proficient (applying AI to specific job tasks), and AI-expert (designing and governing AI systems at scale). Built from the experience of retraining a global workforce of over 250,000 people, its most useful contribution is the insistence that fluency is role-relative. An AI-proficient legal professional and an AI-proficient software engineer are not expected to look the same. The underlying behavioral expectations may be comparable. How they demonstrate them is not.

Zapier has published not one but two AI fluency frameworks in the first quarter of 2026 alone. That pace is worth pausing on. In a technology environment where the tools and their capabilities are shifting faster than most job descriptions can track, even organizations that have invested seriously in defining fluency are finding that their definitions require updating.

Zapier's frameworks structure assessment around AI Mindset, Strategic Acumen, and Technical Skills, with a maturity progression from Unacceptable through Capable and Adoptive to Transformative, where Transformative means AI has fundamentally changed not just how someone works, but how the people around them work. It is a deployable framework, particularly for organizations at an early stage of AI hiring maturity. Its scope, by design, does not extend to systems thinking, ethical judgment, or the collaborative behaviors that determine whether individual fluency scales into organizational impact.

Both frameworks reflect the same underlying recognition: tool lists are insufficient, behavioral dimensions are necessary, and the definition needs to survive the next model release. Neither claims to have closed the problem. Both have moved the conversation forward.

“Tool agnosticism” is an emerging theme. A framework built on specific tools has a shelf life measured in months. A framework built on the behaviors that make someone effective with any tool (judgment, adaptability, ethical reasoning, collaborative transparency) survives the next model release and the next wave of capability, but only when it is combined with actual, hands-on AI skills.

Romina Da Costa, TestGorilla's Director of Talent and Assessment Science, framed the design challenge precisely at our February event: "We advocate for taking a really broad and holistic view of AI fluency. It is not a singular thing. The most lasting skills go beyond the mastery of any single tool into something that is completely tool agnostic."


Where the TestGorilla framework (which we’ll introduce in a moment) extends further is in the dimensions that determine whether individual AI fluency scales into organizational impact: the systems thinking, ethical judgment, and collaborative behaviors that allow AI use to be understood, audited, and built upon by others.

These are the dimensions our survey data identifies as hardest to assess, and the ones most directly linked to the failure modes described earlier.

06.2


The TestGorilla Framework: 5 Pillars of AI Fluency

This is a proud parent moment: We’re excited to share with you the very same framework we use every day at TestGorilla to properly assess AI-fluent talent.

This framework was developed by TestGorilla's own Talent and Assessment Science team, grounded in IO psychology research, validated against job performance data, and designed to generate observable signals across technical and non-technical roles alike.

Each pillar describes a distinct behavioral domain. Together, they define what it means to be AI-fluent in a way that is specific enough to measure, broad enough to travel across functions, and durable enough to remain valid as the tool landscape evolves.

06.3


But first, a bit of pillar-talk

Before introducing the pillars, one question that arises consistently deserves an honest answer: “Which pillar matters most?”

Good question. And our answer is the same as those annoying responses your parents used to give you as a kid: “It depends.”

That dependency is a feature rather than a bug. Tom Booth, Talent Lead at payments fintech Primer, spoke to this directly at our February event: "Company size changes the emphasis quite meaningfully. At earlier stages, the bias is typically toward experimentation and speed. As companies grow, the systems thinking side and the downstream impact of AI use become more important."

Jason Miller, Head of People Intelligence and AI at Natera, working in a regulated healthcare environment, placed responsible and ethical AI use at the top of his list: "If we use AI and it mishandles patient data, or it hallucinates test results, that is not a little bit of egg on our face. That is a lawsuit."

This framework is meant as a diagnostic tool, not a fixed hierarchy. The right weighting depends on your industry, your organizational stage, and the specific role. What the TestGorilla 5 Pillars do, however, is ensure that no critical dimension gets quietly skipped.


06.4


Pillar 1: Applied AI use and workflows

What it measures:

The hands-on, technical dimension of fluency, covering the ability to:

Select the right tool for a specific task.

Decompose work into AI-suitable components.

Execute real work end-to-end using generative AI.

Explain the trade-offs and risks involved.

Critically, it includes knowing when to automate, when to augment, and when to step back from AI entirely.


What AI-fluent looks like in practice:

An engineer who can chain LLM calls with fallback logic and build evaluation tests to flag hallucinations before they reach production.

A marketer who can run AI-assisted A/B tests and audit copy for demographic bias.

A customer success manager who can use AI to synthesize ticket patterns and surface systemic product issues, not just close individual tickets faster.

What this pillar guards against:

The “Confidence vs Competence Problem,” i.e., the candidate who can describe AI tools accurately but cannot operate them under real conditions.

This is the most common false positive in current hiring. Detecting it requires moving beyond theoretical questions into scenario-based tasks that mirror actual job workflows.

What our survey reveals:

For 31% of hiring managers, a primary challenge is distinguishing candidates who truly understand AI from those who just use the right terminology.

06.5


Pillar 2: Learning and digital agility

What it measures:

Tool agnosticism: the meta-skill of staying current across many tools, not just one specific platform.

The ability to learn new AI workflows quickly, adapt when tools or constraints change, experiment and iterate based on outcomes, and help teams evolve their practices over time.

What AI-fluent looks like in practice:

When a tool a candidate relies on is deprecated or updated, they do not wait for retraining. They identify an alternative, test it against their existing workflow, document what changed, and share the updated approach with their team.

What it guards against:

The candidate who has mastered a specific platform but cannot transfer that mastery when the platform changes.

Given that the Microsoft and LinkedIn 2025 Work Trend Index found AI adoption nearly doubled in six months, the half-life of tool-specific knowledge is short. Learning agility is what converts today's investment in AI-fluent talent into long-term returns.

How practitioners test for it:

Tom Booth described his team's approach at our February event: "We might ask someone to answer a question or build something in a live interview. Then, once that is complete, we throw in a requirement change, take away a tool, or introduce a constraint. The stronger candidates do not just tweak the output. They step back, reframe, and adjust their whole approach."

06.6


Pillar 3: Systems thinking and problem solving

What it measures:

Whether the candidate uses AI effectively only in their own lane, or understands how their AI use ripples across the organization. This includes the ability to:

Frame ambiguous problems.

Decompose a role into its AI-suitable and human-essential components.

Reason about the downstream consequences of AI decisions across teams, data pipelines, and users.


What AI-fluent looks like in practice:

A product manager who, before deploying an AI-generated feature recommendation, asks:

Who consumes this output?

What assumptions are embedded in the model?

What happens downstream if the recommendation is wrong?

What is the blast radius if it goes wrong at scale?

And then designs a human-in-the-loop step into the workflow accordingly.

What this pillar guards against:

The individually productive hire who does not scale.

This is precisely the failure Tom Booth described: “A candidate who 10x'd their own output but produced documentation so light that no one else could follow it, and who bypassed security and compliance processes in the process. Individual AI productivity that cannot operate within a system is not a productivity gain. It’s a liability.”

What our survey reveals:

23% of hiring managers specifically flag difficulty assessing cognitive skills like systems thinking and verification of AI output. This pillar is the hardest to detect in a standard interview and the most consequential to miss.

06.7


Pillar 4: Responsible and ethical AI use

What it measures:

The behaviors that make AI adoption sustainable at scale:

Identifying bias, privacy constraints, and governance requirements before they become incidents.

Documenting safeguards and decisions.

Knowing when a human must be in the loop.

Understanding the compliance and regulatory requirements specific to the industry and the data being worked with.


What AI-fluent looks like in practice:

A recruiter who, before deploying an AI screening tool, asks:

Has this model been audited for demographic bias?

What data was it trained on?

What is our process for candidates who are screened out?

And: Who documents the answers so the organization can demonstrate compliance if the process is challenged?

What this pillar guards against:

The fast mover who creates slow-burning liability. This is also a validity signal: if a candidate can't audit an output for bias or hallucinations, they lack the "systems thinking" required for senior roles, i.e., an awareness of the overall context and implications of AI use, including downstream impacts on data security, compliance with frameworks such as the EU AI Act and SOC 2, and reputational damage.

Organizations that prioritize execution speed without ethical guardrails do not avoid consequences. They only defer them, and deferred consequences come back to bite.

What our survey reveals:

Responsible and ethical AI use is the skill most often ranked #1 among those looked for in candidates, ahead of even applied AI use and workflows. 20% of respondents ranked it as the single most important pillar overall.

Interestingly, practitioners are ahead of job descriptions on this. The market has not yet caught up to what hiring managers already know they need.

Isabel Berwick, writing for the Financial Times and speaking at our February event, made the broader case: "In an agent-led future, our human work will be much more about collaboration, innovation, and ethical judgment. That human capacity is going to be needed more than ever."

06.8


Pillar 5: Human-AI collaboration and communication

What it measures:

The ability to treat AI as a genuine teammate and to communicate prompts, assumptions, and outputs transparently.

Creating clear handoffs that allow hybrid human-AI workflows to function reliably across a team rather than only in one person's hands, and documenting how AI was used so colleagues can review, learn from, and build on it.

What AI-fluent looks like in practice:

A data analyst who does not just produce an AI-assisted report but documents which inputs were used, which outputs were verified manually, and where AI judgment was applied versus where human judgment overrode it, so that the next person who picks up the work can understand, audit, and extend it without starting from scratch.

What this pillar guards against:

The AI fluency silo.

A highly capable individual who cannot communicate their AI use creates knowledge concentration rather than knowledge distribution.

The organizational multiplier effect of AI fluency only compounds when it is transferable. When it is not, it stops at the individual and leaves when they do.

How practitioners assess it:

A talent leader for a large Dutch tech company described their approach at our February event: "In tech assessments, candidates can use AI as long as they justify and show the use. What are the prompts they are using? How are they using it? Feel free to use AI, as long as you can explain it and the logic behind it." The output matters. The transparency about how the output was reached matters more.

06.9


What this framework does that buzzwords cannot

Taken together, the 5 pillars shift the hiring question from "do you know AI?" to three harder, more useful ones:

Can this person deliver with AI?

Can they be trusted with it?

Can they bring others with them?

These are not interview questions. They are evaluation dimensions. And they require a different kind of evidence than a thirty-minute conversation can produce.

When we asked nearly 750 hiring managers to rank the 5 pillars in order of importance, the answers were almost uniformly distributed. No pillar dominated. No pillar was ignored.

That finding explains a pattern visible elsewhere in the data. Hiring managers are not unaware of the full picture. 49% report a lack of internal stakeholder alignment on how much AI augmentation a given role actually requires. 54% cannot define fluency consistently across technical and non-technical functions. When internal agreement is out of reach, organizations default to the one dimension that requires no negotiation: tool awareness. It is not that they believe tool awareness is sufficient. It is that tool awareness is the only thing everyone in the room can agree on.

The framework provides the vocabulary to weight each dimension deliberately, role by role, rather than by default.

07 Three things worth doing before this report leaves your desk

The findings in this report describe a market that is trying hard and measuring badly. The fix does not require rebuilding your entire hiring process. It requires making different decisions at specific points and being honest about which signals you are currently trusting that you probably should not be.

07.1


Change the question: Get specific, measure against the framework

Change the question. Stop asking candidates which AI tools they use. Start asking: walk me through the last workflow you redesigned using AI. What changed? What broke? What did you verify? One question replacing another. It costs nothing to change today, and it immediately surfaces execution over vocabulary.

But changing the question is the beginning, not the end.

To do this rigorously, you need to know which questions and tasks are testing which pillars, how you are weighting those pillars for this specific role, and what a strong answer actually looks like against each one. That is a structured evaluation framework, applied before a single candidate enters the process. It requires deciding, in advance, whether systems thinking carries more weight than applied tool use for this hire, whether ethical judgment is non-negotiable or developmental, and what evidence you will accept for each dimension.
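To make those advance decisions concrete, here is a minimal sketch of how a role-specific rubric could be written down once pillar weights and non-negotiables are agreed. It is an illustration under stated assumptions, not TestGorilla's scoring method: the pillar keys, the 1-to-5 scoring scale, the example weights, and the pass floor for non-negotiable pillars are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical keys for the five pillars described in this report.
PILLARS = (
    "applied_ai_use",
    "learning_agility",
    "systems_thinking",
    "responsible_ai",
    "human_ai_collaboration",
)


@dataclass(frozen=True)
class RoleRubric:
    """Role-specific weighting, decided before any candidate enters the process."""

    weights: dict[str, float]       # relative importance of each pillar for this role
    non_negotiable: frozenset[str]  # pillars where a low score rules the candidate out
    floor: float = 3.0              # assumed minimum score (1-5 scale) on non-negotiables

    def __post_init__(self) -> None:
        # Every pillar must be weighted explicitly, so none is quietly skipped.
        if set(self.weights) != set(PILLARS):
            raise ValueError("weights must cover exactly the five pillars")
        if not self.non_negotiable <= set(PILLARS):
            raise ValueError("unknown pillar in non_negotiable")


def evaluate(rubric: RoleRubric, scores: dict[str, float]) -> tuple[float, bool]:
    """Return (weighted average on the 1-5 scale, whether all non-negotiables are met)."""
    total_weight = sum(rubric.weights.values())
    weighted = sum(rubric.weights[p] * scores[p] for p in PILLARS) / total_weight
    meets_floor = all(scores[p] >= rubric.floor for p in rubric.non_negotiable)
    return weighted, meets_floor


if __name__ == "__main__":
    # Hypothetical role in a regulated environment: responsible AI use is non-negotiable.
    regulated_role = RoleRubric(
        weights={
            "applied_ai_use": 2,
            "learning_agility": 1,
            "systems_thinking": 2,
            "responsible_ai": 3,
            "human_ai_collaboration": 2,
        },
        non_negotiable=frozenset({"responsible_ai"}),
    )
    candidate = {
        "applied_ai_use": 5,
        "learning_agility": 4,
        "systems_thinking": 3,
        "responsible_ai": 2,
        "human_ai_collaboration": 4,
    }
    score, ok = evaluate(regulated_role, candidate)
    print(f"weighted score {score:.1f}, meets non-negotiables: {ok}")
    # prints: weighted score 3.4, meets non-negotiables: False
```

In this hypothetical, a candidate with strong applied skills still fails the rubric because responsible AI use was declared non-negotiable for the role. Separating the weighted average from the hard floor is one way to keep "non-negotiable or developmental" an explicit, up-front choice rather than something decided in the debrief.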

That is real preparation work. We are not going to pretend otherwise.

Which is exactly why point two matters as much as point one.

07.2


Give your hiring team their time back: The right early screening tools

Give your hiring team their time back. Right now, your recruiters are managing volume, not making decisions. Hundreds of AI-generated applications that are impossible to differentiate on paper. First-round interviews follow the same script. Screening has become administration. This is not evaluation but a process that consumes human judgment on tasks that do not require it, and withholds it from the ones that do.

If you want your hiring team to do the preparation that a structured AI fluency assessment actually requires, they cannot be buried in the screening layer. Use AI to handle that layer so your team has the time and headspace to make the calibrated judgment calls that only humans can make, on the conversations that actually reveal something.

The preparation is the work. Automation is what makes the preparation possible.

07.3


Pilot one role differently: Start with 1, then slowly make a systemic change

Pilot one role differently. Pick one open role. Not your most important hire, not a complete overhaul, just one role where you introduce structured, science-backed screening alongside what you already do. Skills tests that go beyond the resume. An AI fluency assessment for roles where it matters. A structured evaluation that creates consistency across every candidate rather than relying on whichever hiring manager happens to be in the room. Run it, track the outcomes, and compare the hire quality. The organizations that have done this do not go back.

The gap between how hard organizations are trying to hire for AI fluency and how poorly the outcomes reflect that effort is a measurement problem. The processes currently in use were designed to capture something different from what AI fluency actually requires (in fact, some of them are not even relevant to hiring today), and optimizing them at the margins will not close that gap.

Closing it requires moving from signals that are easy to collect to evidence that actually predicts performance. From descriptions of AI capability to demonstrations of it. From a definition that lives in a document to one that generates observable, auditable signals at every stage of the hiring process.


08 Final Word: What this report was built to do

This report was purpose-built to highlight the problem and the framework. But with TestGorilla, you can go much deeper in your AI hiring processes: we provide the measurement infrastructure to hire AI-fluent talent at scale.

Our proprietary AI fluency assessments are built directly on the 5 Pillars described earlier. They move beyond multiple-choice theory into scenario-based tasks, behavioral evaluations, and structured scoring that generates consistent, auditable signals across technical and non-technical roles alike.

Every one of our 350+ assessments is benchmarked against expert ratings, not just AI output. Every score is explainable: there is no black box spitting out an arbitrary number. Instead, you get a thorough breakdown that gives you clear, dependable answers.

Additionally, a single AI Readiness Interview (conducted by an AI avatar) measures a candidate on all five pillars of the framework. It is also customizable: TestGorilla’s own team has deployed the interview’s framework as a role-specific question set and made a successful hire with it.

For organizations ready to move from vibe-based hiring to evidence-based hiring, the starting point is not a new vocabulary. It is a new kind of evidence.

The candidates who can deliver with AI, be trusted with it, and bring others with them are out there. The question is whether your hiring process is designed to find them.


09 About TestGorilla

TestGorilla is a skills-based hiring platform helping organizations find and hire the right people: faster, fairer, and without the bias of CVs. With 350+ science-backed assessments, 100+ AI interviews, resume scoring, and role simulations, TestGorilla gives hiring teams everything they need to evaluate talent on what actually matters: proven ability.

As of December 2025, TestGorilla works with companies to help them identify and hire AI-fluent talent, and develops scientifically backed tests and interviews that identify role-specific AI fluency.

Read more about our proposition, browse the AI fluency category to find relevant assessments, or reach out to us to put together a role-specific assessment comprising skills tests and interviews.

10 Sources

TestGorilla, 'The State of Skills-Based Hiring 2025', testgorilla.com (June 2025)

Microsoft and LinkedIn, '2025 Work Trend Index Annual Report', microsoft.com (April 2025)

Tracy St.Dic, 'Zapier's AI-First Hiring and Onboarding', zapier.com (June 2025)

Tracy St.Dic, 'Raising the AI Fluency Bar for Every Zapier Hire', zapier.com (April 2026)
